Retinex-guided Channel-grouping based Patch Swap for Arbitrary Style Transfer (under review)


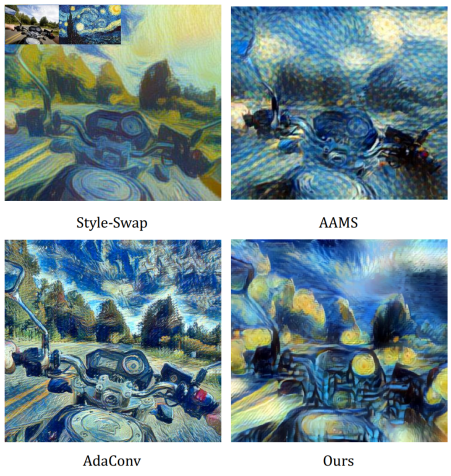

The basic principle of patch-matching-based style transfer is to replace each patch of the content image's feature maps with the closest patch from the style image's feature maps. Because the limited features extracted from a single aesthetic style image are inadequate to represent the rich textures of a natural content image, existing techniques treat the full-channel style feature patches as simple signal tensors and create new style feature patches via signal-level fusion. In this paper, we propose a Retinex-theory-guided, channel-grouping-based patch swap technique that groups the style feature maps into surface and texture channels. New features are created by combining these two groups, which can be regarded as a semantic-level fusion of the raw style features. In addition, we provide a complementary fusion strategy and a multi-scale generation strategy to prevent unexpected black areas and over-stylised results, respectively. Experimental results demonstrate that the proposed method outperforms existing techniques, providing more style-consistent textures while preserving content fidelity.
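The basic patch swap described at the start of the abstract can be illustrated with a minimal NumPy sketch. It is not the proposed method: it covers only the plain full-channel swap, and the function names (`extract_patches`, `patch_swap`), the 3x3 patch size, the stride of 1, the use of cosine similarity as the "closest" criterion, and the averaging of overlapping patches are all assumptions made for illustration.

```python
import numpy as np


def extract_patches(feat, size=3):
    """Collect all overlapping size x size patches (stride 1) of a (C, H, W) map."""
    _, h, w = feat.shape
    patches = [feat[:, i:i + size, j:j + size]
               for i in range(h - size + 1)
               for j in range(w - size + 1)]
    return np.stack(patches)  # shape (N, C, size, size)


def patch_swap(content, style, size=3):
    """Replace every content patch with its most similar style patch.

    Similarity is cosine similarity over flattened full-channel patches
    (an assumption); overlapping swapped patches are averaged.
    """
    _, h, w = content.shape
    c_flat = extract_patches(content, size).reshape(-1, content.shape[0] * size * size)
    s_patches = extract_patches(style, size)
    s_flat = s_patches.reshape(len(s_patches), -1)

    eps = 1e-8  # guards against division by zero for all-zero patches
    c_unit = c_flat / (np.linalg.norm(c_flat, axis=1, keepdims=True) + eps)
    s_unit = s_flat / (np.linalg.norm(s_flat, axis=1, keepdims=True) + eps)
    nearest = np.argmax(c_unit @ s_unit.T, axis=1)  # best style patch per content patch

    out = np.zeros_like(content, dtype=float)
    count = np.zeros((1, h, w))
    idx = 0
    for i in range(h - size + 1):
        for j in range(w - size + 1):
            out[:, i:i + size, j:j + size] += s_patches[nearest[idx]]
            count[:, i:i + size, j:j + size] += 1
            idx += 1
    return out / count  # average where patches overlap
```

The output keeps the spatial layout of the content features but is built entirely from style patches, which is why a single style image with few distinct patches can limit the textures available for the swap.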